What's the best way to market your law firm in 2026?

Well, it depends who you ask.

Some lawyers might tell you to stick to traditional marketing strategies, while others have gone digital.

We've been helping law firms get more leads and clients for over 10 years, and in that time one marketing strategy has beaten all the rest...

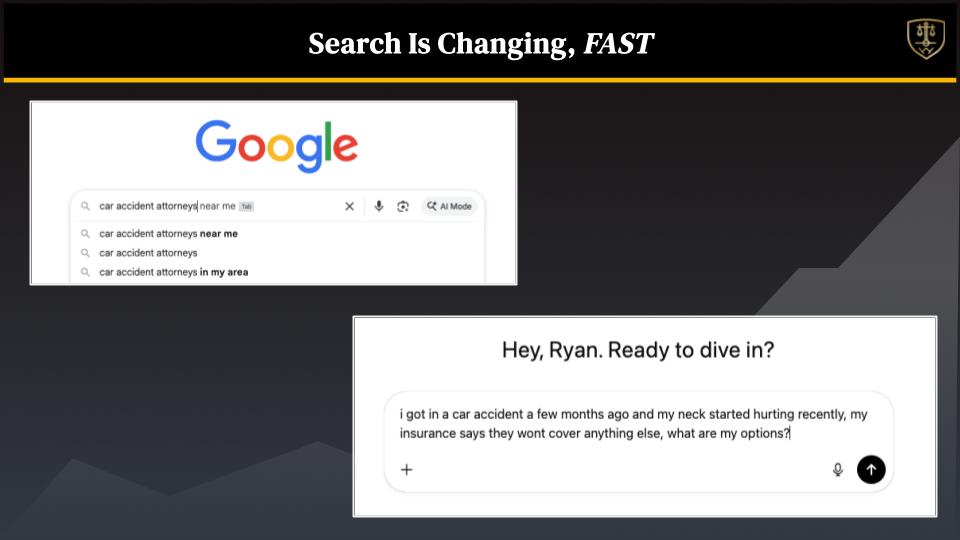

Search engine optimization (SEO).

But in 2026, the rules of SEO have changed.

AI search is here and it needs to be accounted for in your strategy.

After all, SEO stands for "Search Engine Optimization", not Google Optimization - that means all types of search engines need to be accounted for.

In this post, I will break down the best SEO strategy for law firms that is guaranteed to increase your organic rankings in Google.

Why SEO vs other marketing channels for lawyers?

First, let's analyze why other marketing tactics might not be as good a fit as SEO.

Google PPC Ads for lawyers are costly

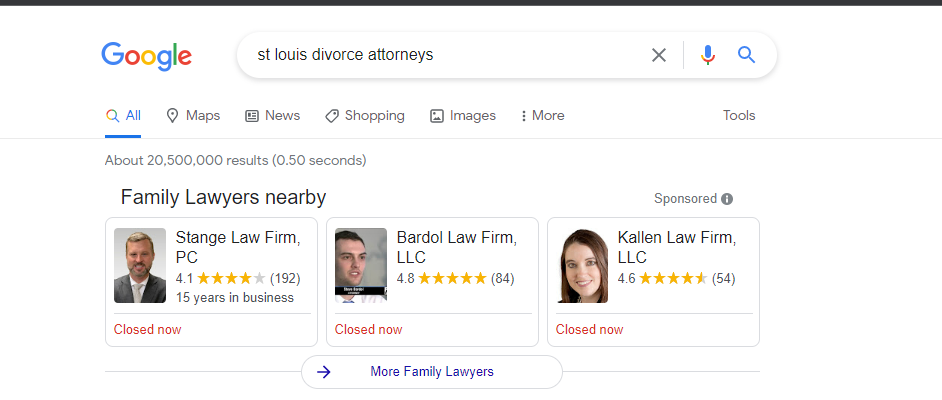

In case you're new, paid search (PPC ads) works like this: someone types in a query searching for a law firm, and search results appear with ads at the top.

Each time someone clicks on your ad, you pay $X (the amount depends on how competitive the key phrase is). For example, if you're targeting "St. Louis divorce attorney" at $10.50 per click, you'll easily spend hundreds or even thousands weekly for leads.
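To make the math concrete, here is a rough sketch of how per-click pricing adds up. The $10.50 rate comes from the example above; the weekly click volumes and the 5% click-to-lead conversion rate are assumptions picked purely for illustration, not benchmarks.

```python
def weekly_ad_spend(cost_per_click: float, clicks_per_week: int) -> float:
    """Total weekly spend: every click is billed at the per-click rate."""
    return round(cost_per_click * clicks_per_week, 2)


def cost_per_lead(cost_per_click: float, conversion_rate: float) -> float:
    """Average ad spend needed to produce one lead.

    conversion_rate is the share of clicks that become a lead (0 to 1).
    """
    if not 0 < conversion_rate <= 1:
        raise ValueError("conversion_rate must be in (0, 1]")
    return round(cost_per_click / conversion_rate, 2)


CPC = 10.50  # per-click price from the example above

# Click volumes and the 5% conversion rate are assumed for illustration.
for clicks in (50, 200):
    print(f"{clicks} clicks/week -> ${weekly_ad_spend(CPC, clicks):,.2f}")
print(f"Cost per lead at 5% conversion: ${cost_per_lead(CPC, 0.05):,.2f}")
```

At 50 clicks a week that is $525, and at 200 clicks it is $2,100, which is how a single keyword lands in the "hundreds or even thousands weekly" range.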

But is it worth it?

If you've got the money to spend and the right strategy, yes.

But if you're tired of spending tens of thousands on ads and struggling to generate and/or close the leads, then most definitely not.

DO PPC RIGHT: Complete guide to Pay Per Click Ad campaigns for lawyers

Social media ads require a lot of work

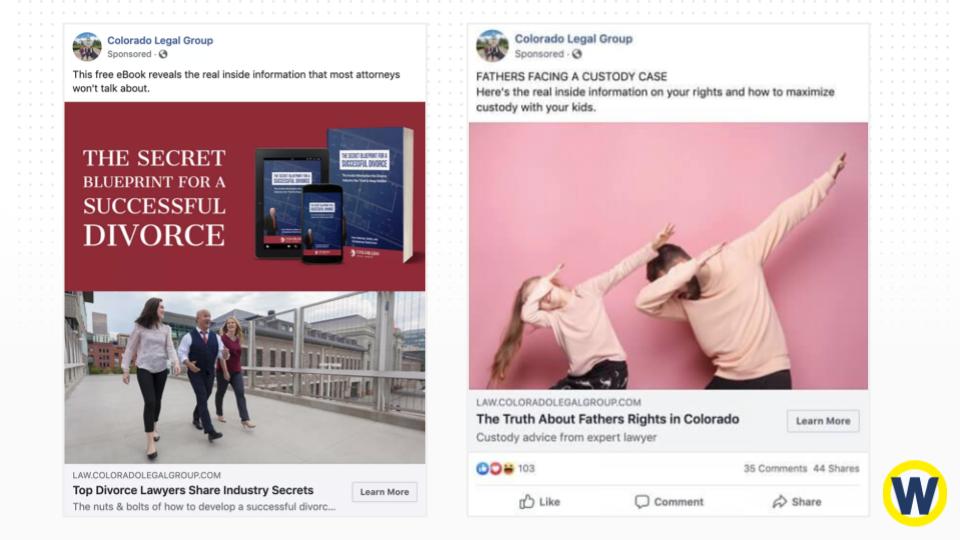

Facebook. TikTok. Instagram. LinkedIn. All the popular social media platforms have ad programs available. Seems like a no-brainer strategy to get your law firm in front of a ton of people.

But there's a caveat: social media users are there to socialize and scroll through their feeds to find interesting conversations, not to download an eBook or contact a service provider.

That means if you want to stand out, you need to be able to create video ads that engage and entertain.

That requires effort. If you don't have the bandwidth to make videos, then social media ads won't be effective for you.

But it makes sense—the need for an attorney isn't something you stumble upon. You don't see an ad on Facebook and think, "Gee, I do need a DUI lawyer right now."

When a person needs a lawyer, they head to Google and search for one. And this is an excellent example of the power of search engine optimization. Once you understand how to use it to your benefit, you'll find it's simple, cost-effective, and profitable.

Organic social is good for brand, not leads

Unfortunately, not all organic marketing is created equal. While SEO for lawyers works phenomenally on Google, you don't see the same impact on social media.

Publishing content, engaging with others, and offering your expertise are excellent for brand building. But it's not the proper tool for growing leads and customers.

For one, it takes up a ton of time and energy (both of which you're already short on). And two, it doesn't guarantee you'll reach a particular audience who's currently looking for legal assistance.

No one sees your ads on bus benches, radio and billboards

Traditional ads, such as those found on buses, benches, billboards, and even radios, are prominent in the legal industry. I consistently see bus benches plastered with attorney ads in Miami.

But the reality is, most people aren't going to pay attention, let alone write down your phone number to call you. Heck, most people drive a vehicle and don't have the time or desire to.

Now, this isn't to say they don't have a place, because they do. For example, for large law firms with hundreds of locations and thousands of attorneys, reaching the masses is a valid goal.

At this level, your top priority is brand awareness, which makes reach essential. However, this approach won't drive immediate customers to your doorstep.

It's a long and expensive branding strategy. And honestly, these ads don't work all that well for revenue growth.

If your primary focus is acquisition, then your best bet isn't on out-of-home ads.

Dive deeper: THIS Is The BEST Way To Generate Leads For Law Firms In 2026

SEO traffic is plentiful for law firms and converts the highest

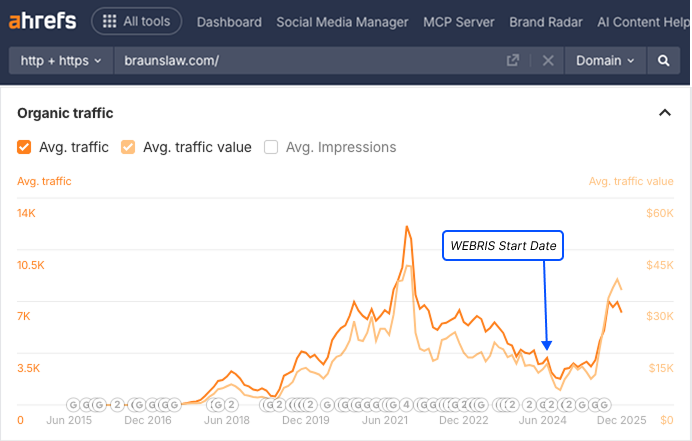

Organic traffic for lawyers is a gem that keeps on giving. It grows in perpetuity, unlike ads that require you to spend money to make money. Paid ad results are like a staircase: the more you want to earn, the more you must invest.

The cost of acquisition continues to climb, making it harder to grow your ROI.

SEO for attorneys, on the other hand, continuously grows, thanks to the many keywords you can target. Eventually, you'll gain the famous hockey stick growth.

At this point, your spending stays the same, while your return on investment increases.

It's also worth noting that nearly 66% of calls in the legal sector stem from organic search.

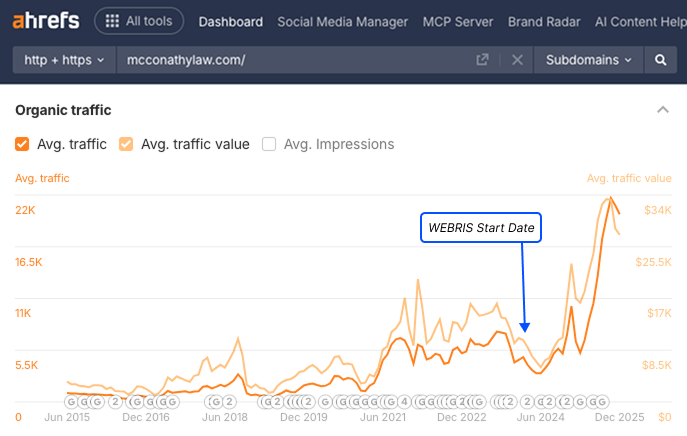

We can attest to this: McConathy Law worked with us last year to grow its organic sessions by 200% and client call bookings by 178% using SEO.

Not bad, considering it's all from free traffic.

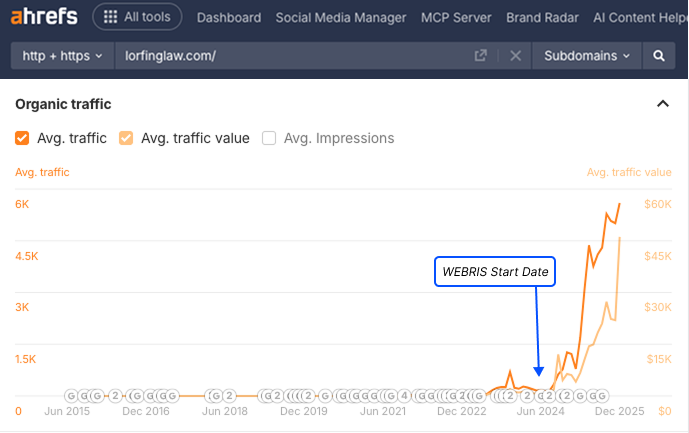

And this isn't a one-time thing. We see the same with all of our clients. For example, we're currently working with Keith & Lorfing, whose organic traffic we've helped grow the same way.

Our proven SEO strategy for law firms

At WEBRIS, we're all about simplicity. We live and work by this concept using the Pareto Principle:

Focus your time and energy on doing things that'll move the needle, and do it exceptionally well.

It requires using 20% input to drive 80% of your output. This philosophy couldn't be truer for lawyer SEO strategies.

Here's our SEO process that has worked for hundreds of law firms over the last year.

1. Setting the foundation for SEO

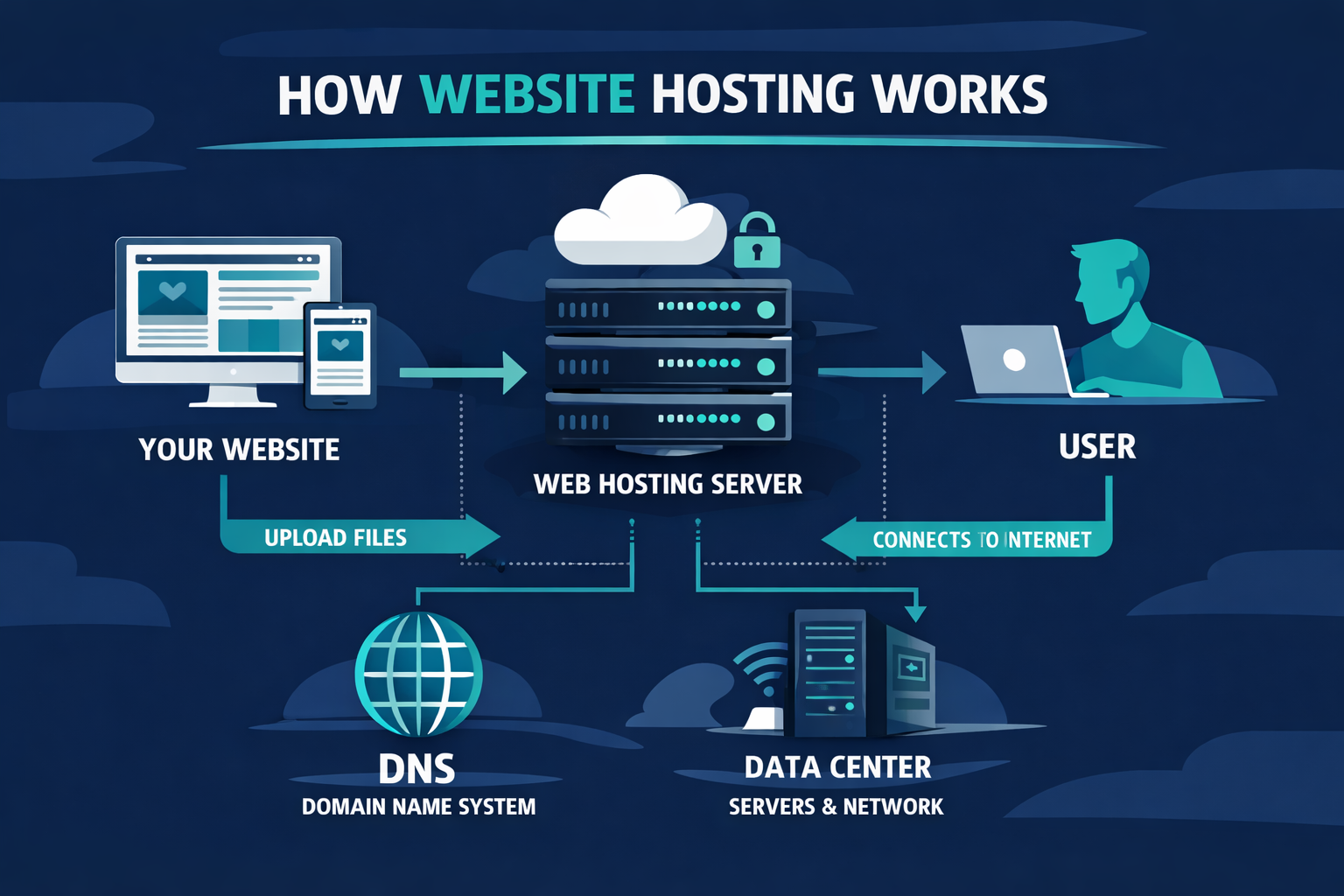

Before you can rank anywhere on Google, you need a website that's fast, secure, and, most importantly, owned entirely by you. Not your marketing agency. Not some third-party vendor. You.

Why law firms need to own their hosting:

A lot of SEO agencies will try and sell you hosting bundled into their services. DO NOT FALL FOR IT. This is one of the most common ways agencies lock law firms into long-term contracts and hold their websites hostage.

Here's how the scam works: The agency sets up hosting under their own account, builds your website on their hosting, and charges you monthly.

If you ever want to leave, you discover they own your website files, domain, and all your content. You're forced to either stay with them or start completely from scratch.

Your law firm needs to own your website, your domain name, your hosting account, and all your digital assets. This gives you the freedom to switch agencies, hire freelancers, or bring marketing in-house without losing years of SEO work.

How to set up your own hosting (in 4 simple steps):

Step 1: Choose a reliable hosting provider

Recommended hosts for law firms:

- SiteGround ($30-80/mo): Excellent speed and support, WordPress-optimized

- WP Engine ($30-100+/mo): Premium WordPress hosting, best performance

- Bluehost ($10-25/mo): Budget-friendly for starting out

Step 2: Purchase your domain separately

Buy your domain (yourfirmname.com) from a separate registrar like Namecheap or Squarespace Domains (which took over Google Domains registrations). Register it in YOUR name using YOUR email, not an agency's. This ensures you maintain complete control even if you switch hosting providers.

Step 3: Install WordPress (takes 5 minutes)

Most hosts offer one-click WordPress installation through their dashboard. WordPress is the right choice for law firms because:

- SEO-friendly out of the box: Clean code, mobile-responsive, proper URL structures

- Complete ownership: Open-source platform—you own everything

- Any developer can work on it: Never locked into one vendor

- Plugins for everything: 60,000+ plugins for forms, SEO, chat, and more

- Easy to update: Most attorneys learn basic WordPress management in an hour

Step 4: Enable SSL and choose a professional theme

Your hosting provider should include free SSL certificates (the "https" and padlock icon). HTTPS is a Google ranking signal and is essential for visitor trust.

Then select a professional WordPress theme designed for law firms. Good options include Divi ($89/year), Avada ($69 one-time), or law-specific themes from ThemeForest ($60-100). Look for mobile-responsive, fast-loading themes that are regularly updated.

What to tell marketing agencies:

When you hire an SEO agency or web developer, make your expectations clear: "We own our hosting and WordPress installation. We'll create a user account for you with appropriate permissions. We maintain ownership of all assets including the domain, hosting, content, and website files."

Professional agencies will respect this. Agencies that push back or insist on controlling your hosting are showing red flags.

Setting up your own hosting and WordPress site takes 2-3 hours and costs $200-500 to start. This investment gives you complete ownership, freedom to switch agencies anytime, and the foundation for all your SEO work.

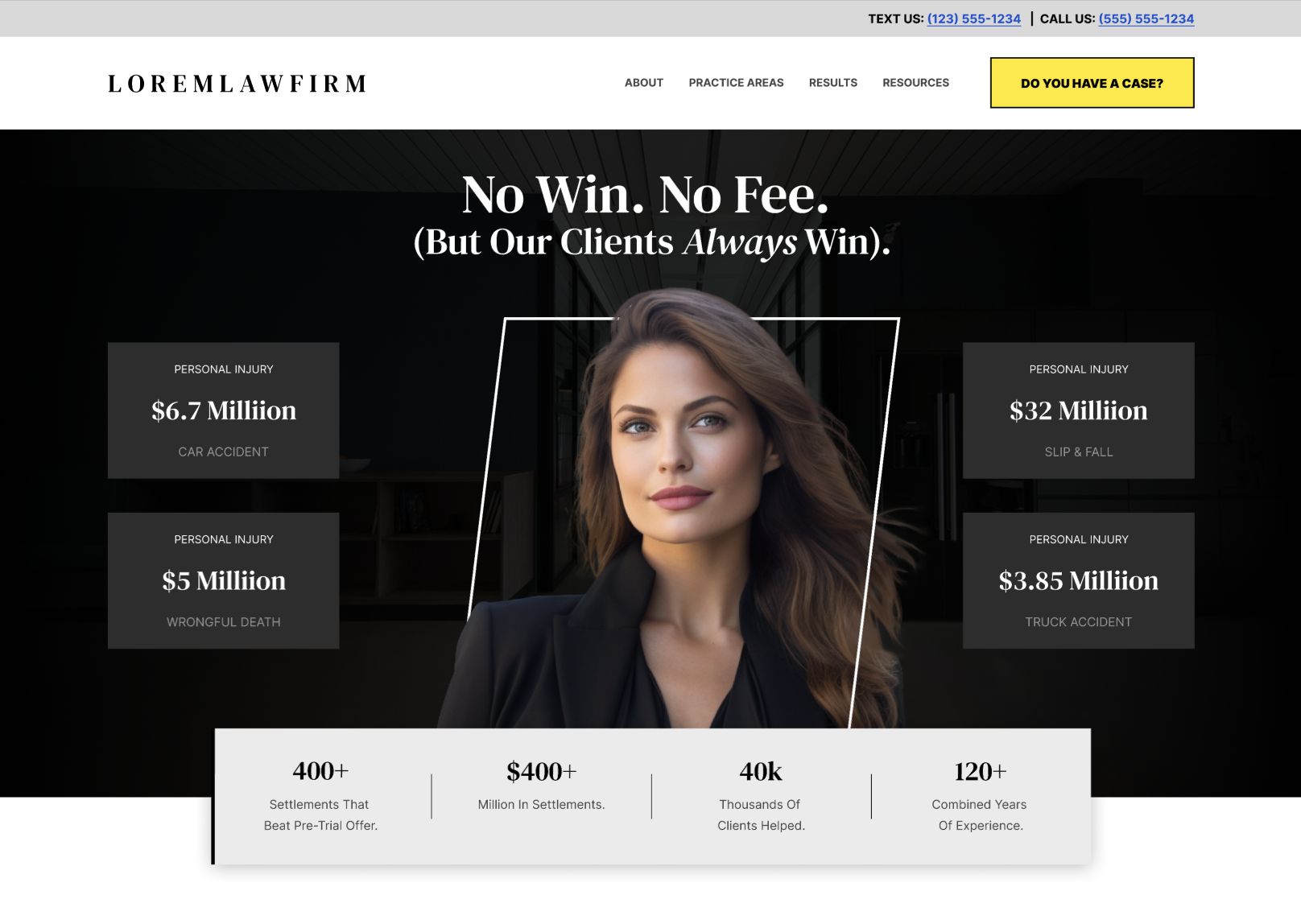

2. Designing a user-friendly law firm website

A user-friendly website is essential for providing a good user experience and improving search engine rankings. But here's what most agencies won't tell you: good design doesn't necessarily mean more leads.

Law firm websites need to prioritize clarity and function over flashy design. Your potential clients aren't looking to be entertained. They're stressed, they have a problem, and they want to know if you can help them.

What actually matters for law firm websites:

- Clear messaging – Can someone understand within 5 seconds what you do and who you help? If your homepage is filled with legal jargon and vague statements about "committed advocacy," you're losing people.

- Fast loading speeds – A beautiful website that takes 8 seconds to load will lose more clients than an average-looking website that loads in 2 seconds. Google also penalizes slow sites in rankings.

- Mobile-friendly design – Over 60% of legal searches happen on mobile devices. If your website doesn't work perfectly on phones, you're losing the majority of potential clients.

- Easy-to-read content – Use legible font sizes, clear headings, and plenty of white space. Legal topics are already complex—don't make your website hard to read on top of that.

- Secure and trustworthy design elements – Professional imagery, SSL certificates (https), and trust badges all signal legitimacy.

The essential pages your law firm website needs:

Homepage – Your digital front door. This needs to immediately communicate who you are, what you do, and why someone should choose you. Include your phone number prominently, a clear call-to-action, and trust signals like years in practice, case results, or awards.

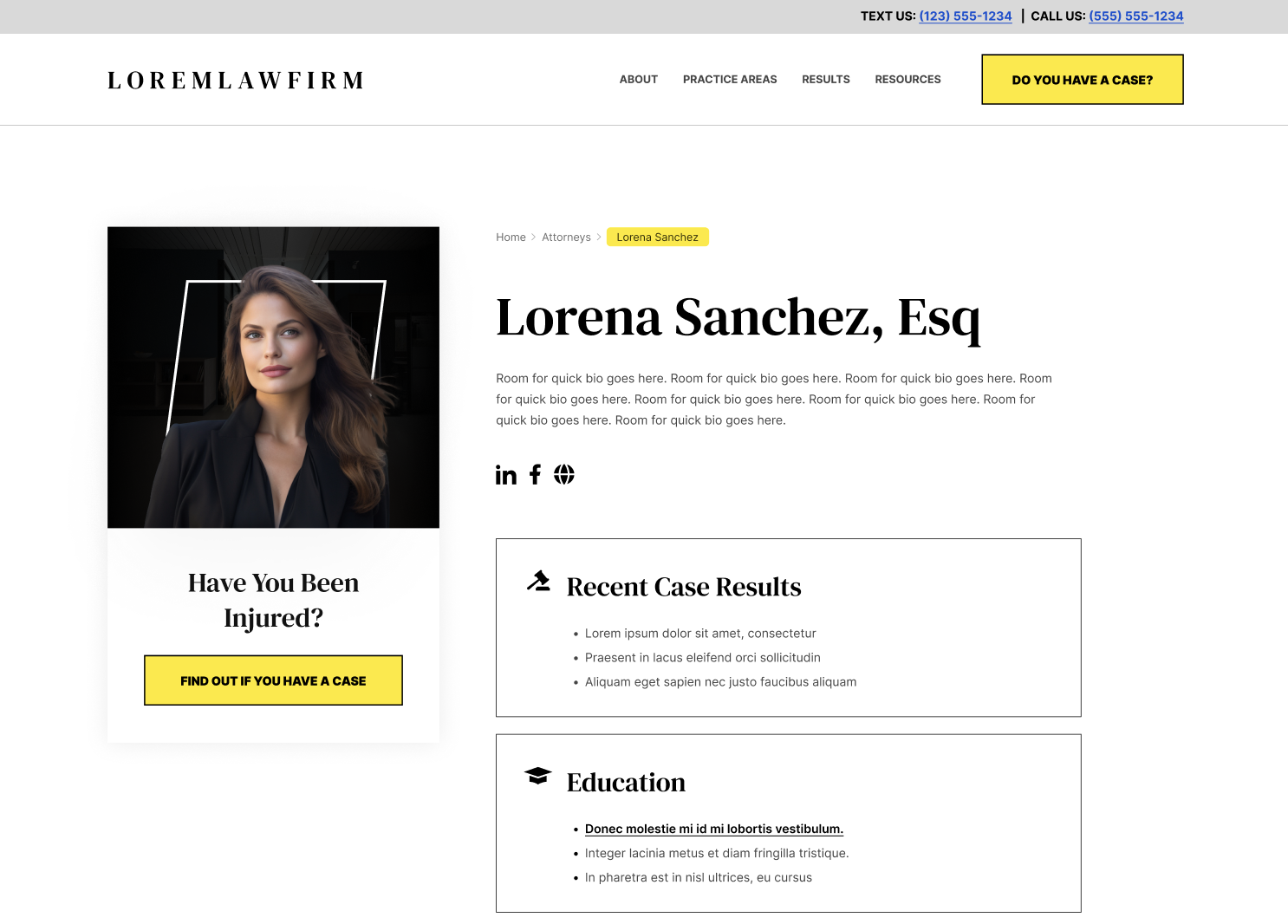

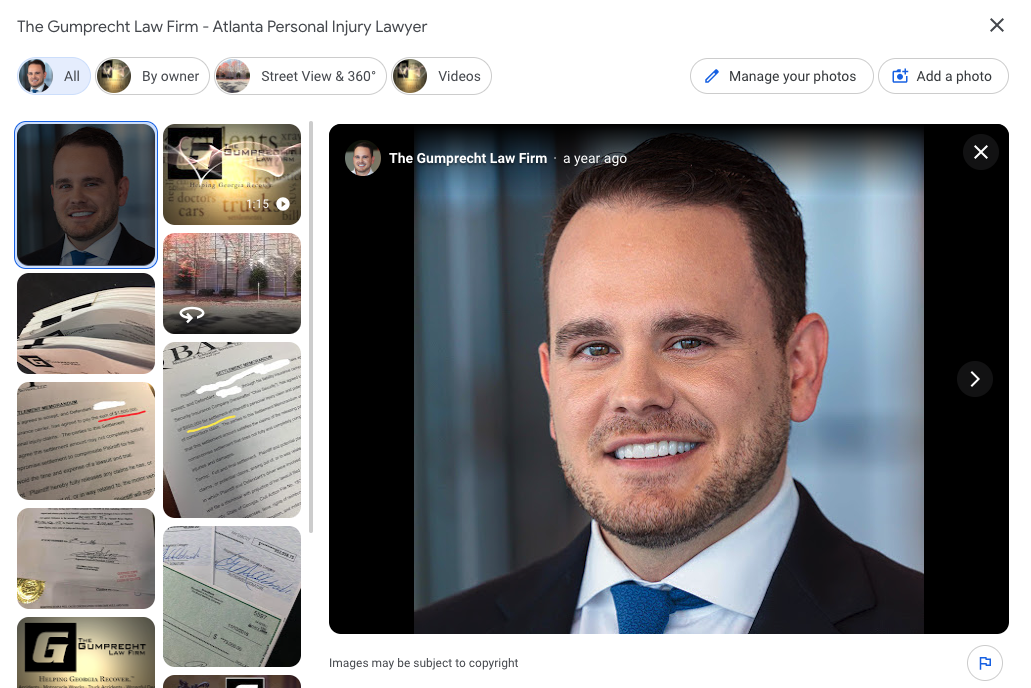

About Page – Often the second-most visited page on law firm websites. People want to know who they're potentially hiring. Tell your story, showcase your credentials, and build trust. Include photos of your team and office.

Attorney Bio Pages – If you have multiple attorneys, each needs their own dedicated bio page. These serve double duty: they help potential clients get to know your team, and they're powerful for local SEO.

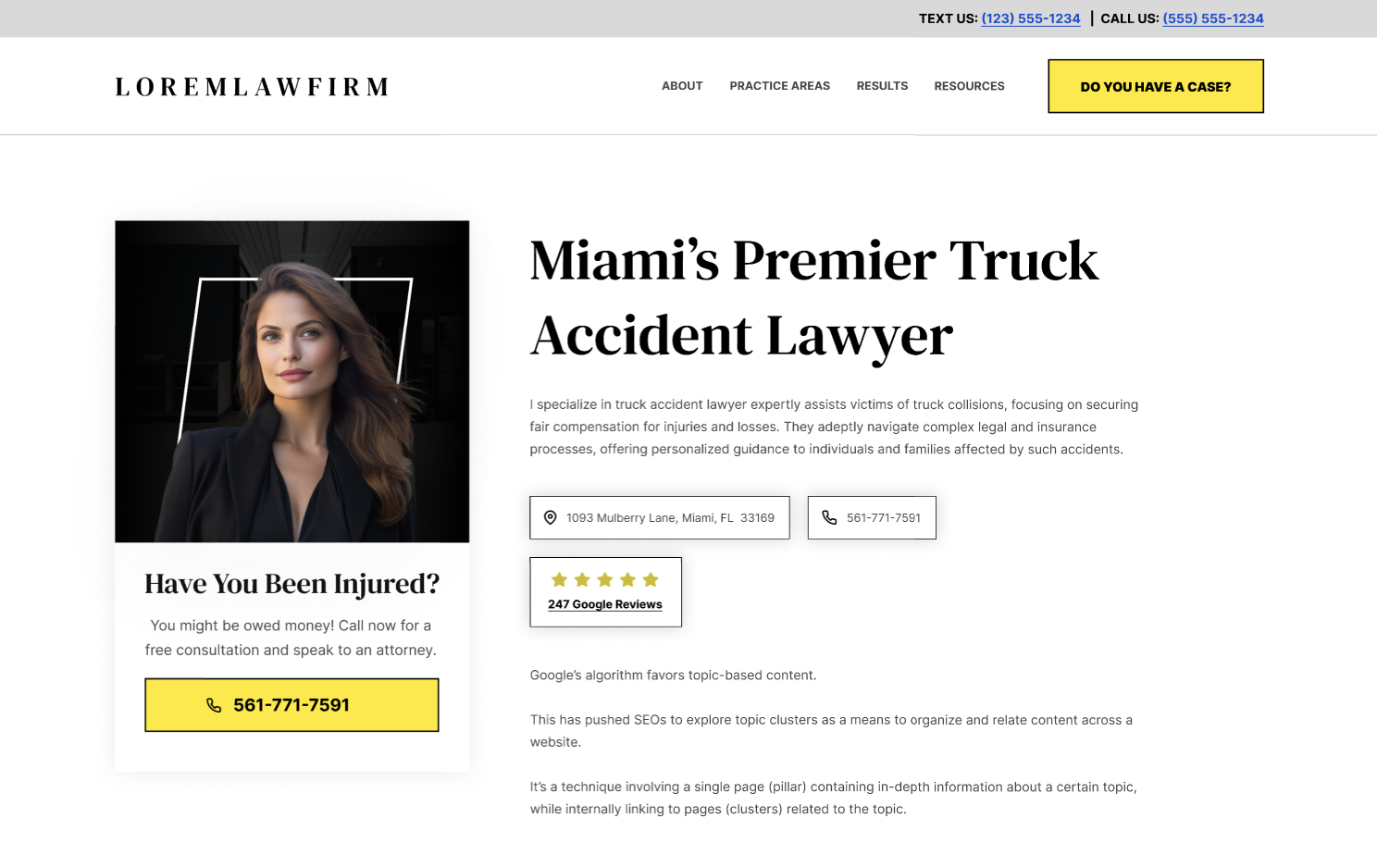

Practice Area Pages – This is where most law firms get their structure completely wrong, and it kills their SEO. Here's the right way to do it:

Think of your practice areas like a tree. At the trunk, you have your main practice area, say Personal Injury. This gets its own page: "Personal Injury Attorney in [City]."

The branches are your sub-practice areas. Under Personal Injury, you have:

- Car Accident Attorney in [City]

- Motorcycle Accident Attorney in [City]

- Slip and Fall Attorney in [City]

- Medical Malpractice Attorney in [City]

- Wrongful Death Attorney in [City]

Why? Because each page targets a specific search query. Each page can rank independently. Each page serves a specific user intent. This is exactly how Google wants to see law firm websites structured.

If you have multiple office locations, you multiply this structure for each location. So if you have offices in Miami, Fort Lauderdale, and Boca Raton, you need practice area pages for each location.
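To see how quickly this structure multiplies, here is a small sketch that pairs every sub-practice area with every office location. The page titles follow the naming pattern above and the cities are the three from the example; the URL pattern is a hypothetical convention chosen for illustration, not a required format.

```python
import re
from itertools import product


def slugify(text: str) -> str:
    """Lowercase the text and join words with hyphens for use in a URL."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def build_page_plan(sub_practices, cities):
    """Return one (title, url_path) pair per city and sub-practice area."""
    pages = []
    for city, practice in product(cities, sub_practices):
        title = f"{practice} Attorney in {city}"
        # Hypothetical URL pattern: /<city>/<practice>-attorney/
        path = f"/{slugify(city)}/{slugify(practice)}-attorney/"
        pages.append((title, path))
    return pages


SUB_PRACTICES = [
    "Car Accident",
    "Motorcycle Accident",
    "Slip and Fall",
    "Medical Malpractice",
    "Wrongful Death",
]
CITIES = ["Miami", "Fort Lauderdale", "Boca Raton"]

plan = build_page_plan(SUB_PRACTICES, CITIES)
print(len(plan), "pages to plan")
for title, path in plan[:3]:
    print(title, "->", path)
```

Five sub-practice areas across three offices already means 15 pages, before counting the main Personal Injury page for each city.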

Location Pages – For each physical office you have, create a dedicated location page with your address, phone number, map, directions, and parking information. This supports your Google Business Profile and local SEO efforts.

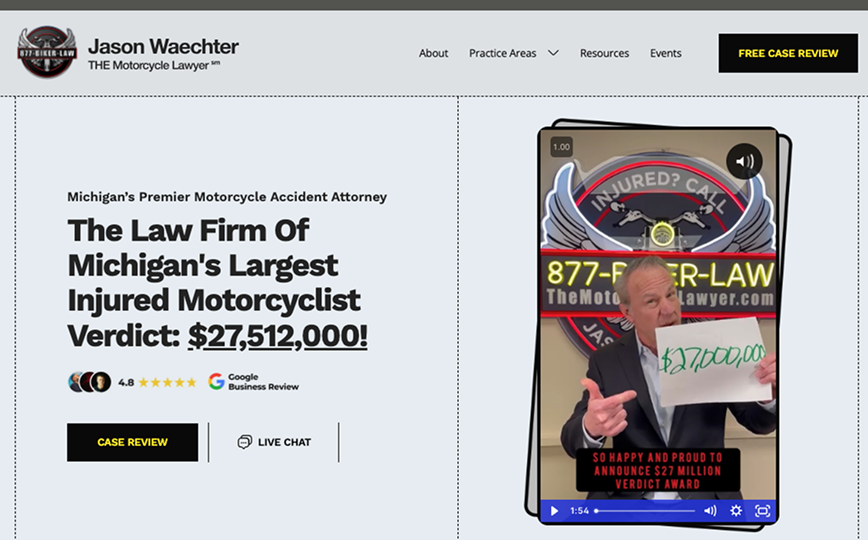

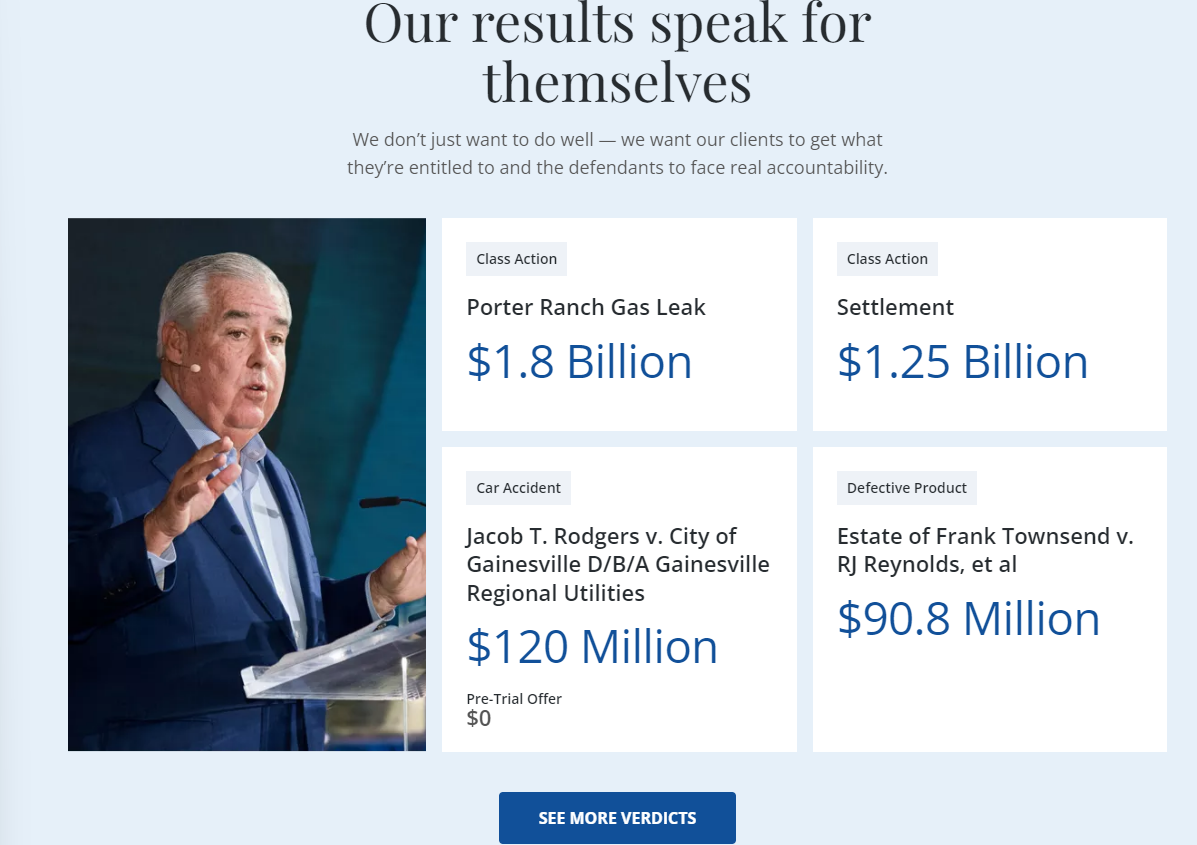

Results/Case Results Page – Social proof is everything in legal marketing. A page showcasing your case results, verdicts, and settlements builds credibility. Just make sure you follow your state bar's advertising rules about how results can be presented.

Blog – A blog allows you to create ongoing content that brings in organic traffic. Each blog post is another opportunity to rank for relevant searches and demonstrate your expertise. (We'll cover content strategy in section 6.)

Contact Page – This is where conversion happens. Make it easy. Include multiple contact methods (phone, form, email), office location with a map, and clear calls-to-action.

A well-designed, properly structured website will help establish trust with potential clients and improve your law firm's online credibility. More importantly, it will convert visitors into phone calls.

Dive deeper: Use this website design guide to save your law firm $50k

3. Dominate the Google Maps Pack

Here's a stat that should change your entire SEO strategy: over 50% of law firm phone calls come from Google Maps Pack rankings.

Not from your website ranking on page one. Not from paid ads. From that three-pack of local results that appears with the map when someone searches for legal services in your area.

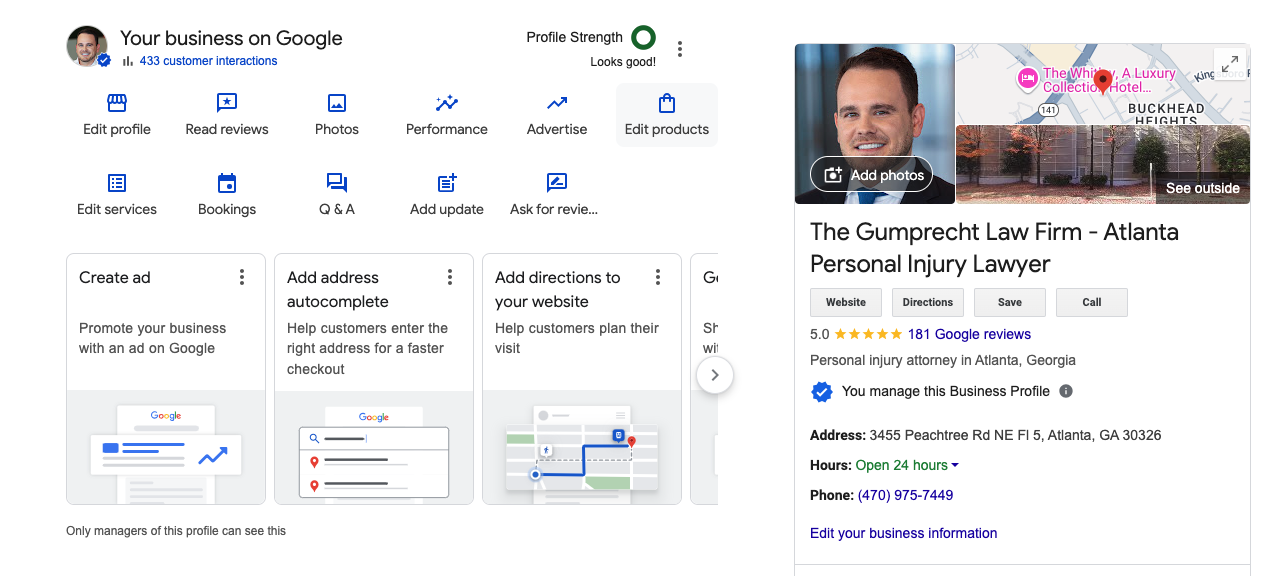

Your target customers are in your locale and use Google to find your legal services. So it makes sense to optimize your Google Business Profile. Doing so will boost your visibility in local search results.

Local search is when a search term includes a city, county, state, or other geographical terms to localize the results.

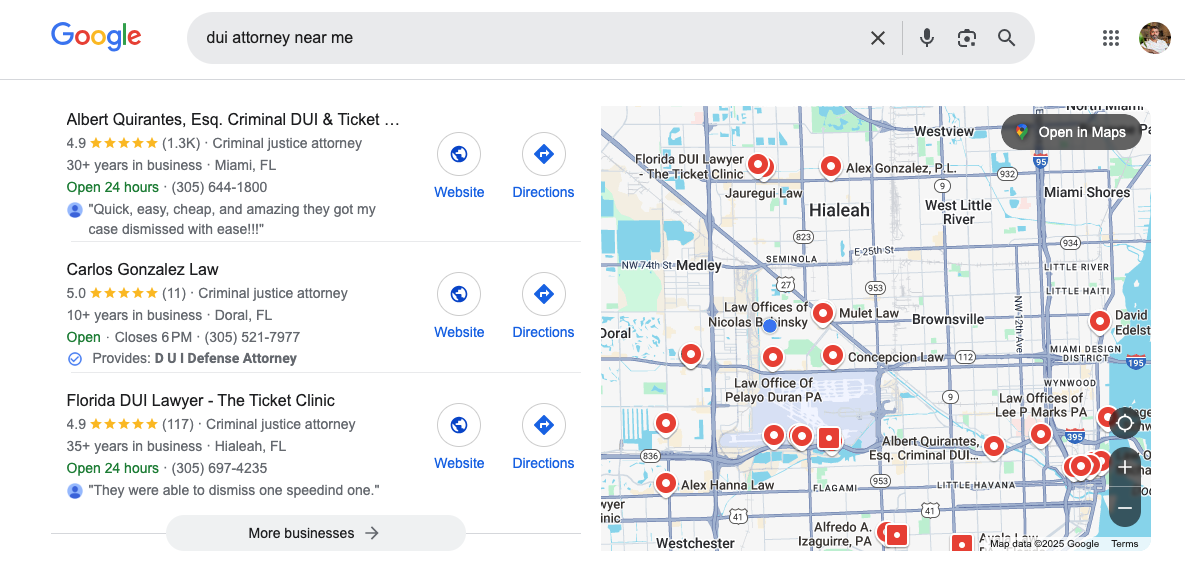

For example, when you type in "DUI attorney Miami," it triggers the Maps Pack:

It showcases a list of DUI attorneys in Miami next to a map. This should be a target because it includes your website link, business hours, phone number, and directions—perfect for guiding prospects straight to your office.

Why the Maps Pack is your most valuable organic placement:

- Visual dominance: The Maps Pack takes up massive screen real estate on both desktop and mobile, with an actual map, business photos, and critical information all displayed prominently.

- Trust signals galore: Star ratings, review counts, business hours, and proximity indicators are immediately visible—no clicking required.

- Click-to-call functionality: On mobile (where most legal searches happen), one tap connects the potential client directly to your office.

- Complete business snapshot: Your Google Business Profile functions like a mini-website within Google itself, showing services, photos, Q&A, posts, and more.

- Above the directories: You rank here before Super Lawyers, Avvo, Yelp, and other third-party sites that dilute your traffic.

To rank in the top three, you need a verified physical location and a business profile optimized for local search specifically.

Here's the complete optimization process:

Verify your physical address: Google will mail you a postcard with a code to verify. Post office boxes don't count—you need a legitimate office location.

Complete every section: Fill out 100% of your profile including business description, services, attributes, hours, appointment links, and booking options. Google favors complete profiles.

Choose precise categories: Select your primary category carefully (e.g., "Personal Injury Attorney" not just "Attorney"). Add all relevant secondary categories for your practice areas.

Optimize your business description: Write a keyword-rich description (750 characters max) that clearly explains your practice areas and geographic service area.

Implement a systematic review strategy: Reviews are the lifeblood of Maps Pack rankings. Use email outreach to ask satisfied clients for reviews across the web (Google, Avvo, FindLaw, etc.). You need:

- A consistent system to request reviews from satisfied clients

- Reviews across multiple platforms (Google, Avvo, Yelp, Lawyers.com)

- Professional responses to every review (positive and negative)

- Fresh reviews coming in monthly—Google favors recency

Use Google Posts weekly: Publish weekly updates about case results (where appropriate), legal tips, news, or firm updates. This signals activity to Google.

Enable messaging: Turn on Google's messaging feature and respond within minutes. Response time is a ranking factor.

Build local citations: Claim or create business citations in local business directories (Yelp, Bing Places, Yellow Pages, etc.). Ensure your NAP (Name, Address, Phone) is identical across all of them, including Avvo, FindLaw, Justia, and other legal-specific directories.

Add Q&A content: Use the Questions & Answers section to address common concerns potential clients have. This is indexed content that helps rankings.

Monitor with Google Search Console: Use Google Search Console to track organic traffic growth and diagnose technical issues, such as website indexing and sitemap submissions. This tool is essential for analyzing performance metrics effectively.

Google will use these indicators to determine your ranking position in local search and the Maps Pack. It evaluates Maps Pack rankings based on three primary factors: relevance (how well your profile matches the search), distance (proximity to the searcher), and prominence (your overall authority and reputation).

Remember: your Google Business Profile is not a "set it and forget it" asset. Treat it like a website that needs regular maintenance, fresh content, and continuous optimization. Law firms that do this consistently dominate their local markets.
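The NAP consistency requirement from the local citations step lends itself to a quick automated check. The sketch below is one possible approach, not a tool we are describing: the firm details are made up, the listings are typed in by hand rather than fetched from any directory, and the normalization rules (ignore case, extra spaces, and phone punctuation) are an assumption about what counts as a purely cosmetic difference.

```python
import re


def normalize_nap(listing: dict) -> dict:
    """Reduce a listing to a comparable form.

    Names and addresses are lowercased with whitespace collapsed;
    phone numbers keep digits only, so "(305) 555-0100" and
    "305.555.0100" compare as equal.
    """
    return {
        "name": " ".join(listing["name"].lower().split()),
        "address": " ".join(listing["address"].lower().split()),
        "phone": re.sub(r"\D", "", listing["phone"]),
    }


def find_nap_mismatches(reference: dict, listings: dict) -> dict:
    """Map each directory to the fields that differ from the reference."""
    ref = normalize_nap(reference)
    mismatches = {}
    for directory, listing in listings.items():
        current = normalize_nap(listing)
        fields = [field for field in ref if current[field] != ref[field]]
        if fields:
            mismatches[directory] = fields
    return mismatches


# All firm details below are made up for illustration.
reference = {
    "name": "Example Law Firm",
    "address": "100 Main St, Suite 200, Miami, FL 33101",
    "phone": "(305) 555-0100",
}
listings = {
    "Avvo": {
        "name": "Example Law Firm",
        "address": "100 Main St, Suite 200, Miami, FL 33101",
        "phone": "305.555.0100",
    },
    "FindLaw": {
        "name": "Example Law Firm",
        "address": "100 Main Street, Miami, FL 33101",
        "phone": "(305) 555-0100",
    },
    "Justia": {
        "name": "Example Law Firm PA",
        "address": "100 Main St, Suite 200, Miami, FL 33101",
        "phone": "(305) 555-0199",
    },
}

print(find_nap_mismatches(reference, listings))
```

With this sample data, the FindLaw address and the Justia name and phone are flagged, while the Avvo listing passes because only the phone formatting differs. If you want listings to match character for character, compare the raw strings instead of the normalized ones.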

Dive deeper: The Complete Guide To Local SEO For Lawyers

4. Optimize for AI search engines (GEO - Generative Engine Optimization)

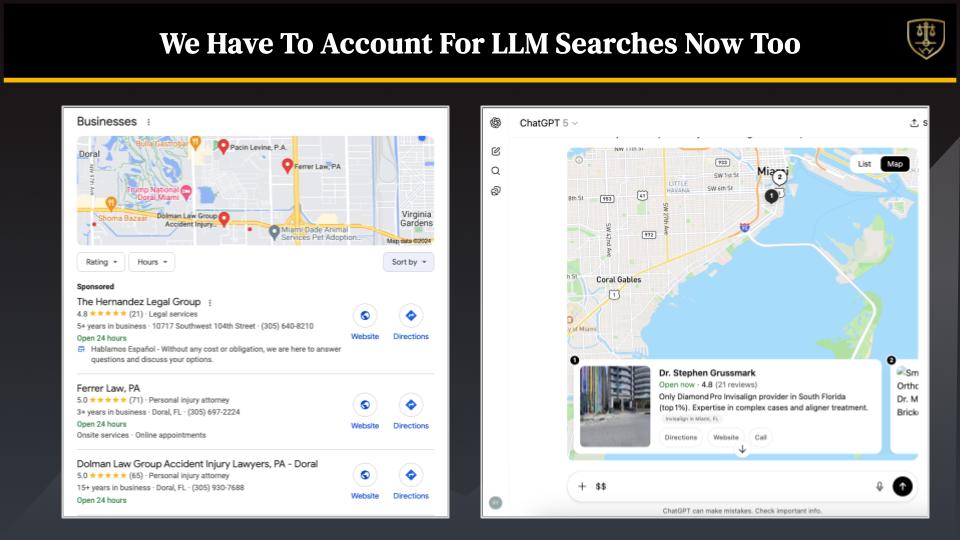

Here's something most law firm SEO agencies won't tell you: Google isn't the only search platform anymore.

ChatGPT, Perplexity, Google's AI Overviews, and other AI-powered search platforms are fundamentally changing how potential clients discover attorneys. Be honest: do you use ChatGPT? Your potential clients do too.

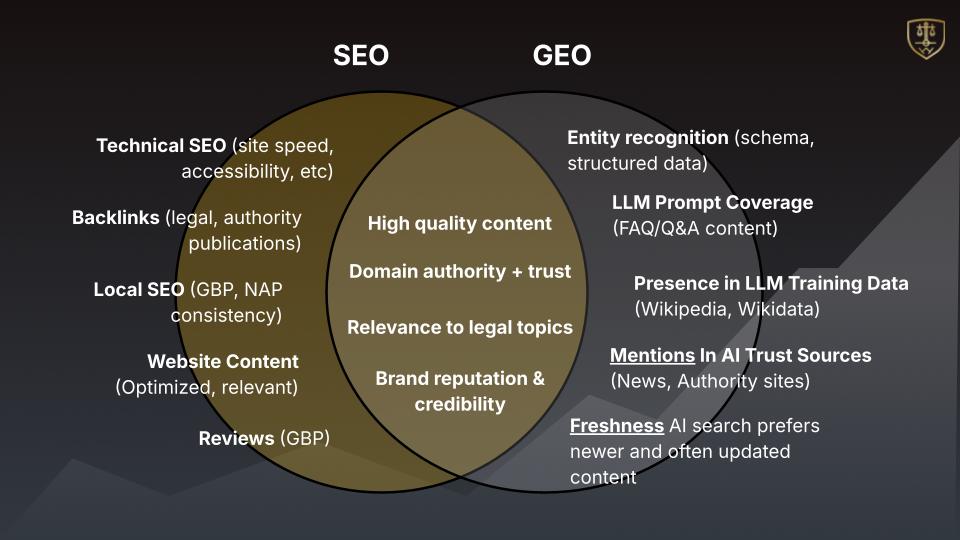

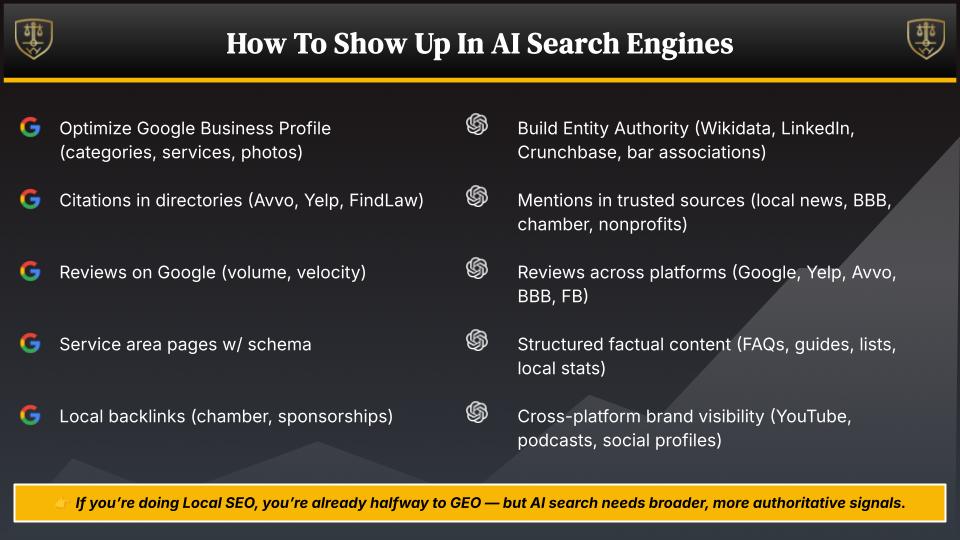

This is where GEO (Generative Engine Optimization) comes in. While SEO focuses on traditional search engines, GEO focuses on optimizing your presence for AI-powered search platforms that generate answers rather than just displaying links.

When someone asks ChatGPT "Who are the best personal injury lawyers in Chicago?", the AI generates a response by pulling from various online sources—not just websites. It considers:

- Directory profiles (Yelp, Avvo, Super Lawyers)

- Google Business Profiles

- Review platforms

- Legal publications and press mentions

- Website content

- Social proof and reputation signals

The AI doesn't just give them 10 blue links—it synthesizes information and recommends specific attorneys, often in a local Maps Pack-style format within the chat interface.

The good news: GEO and SEO overlap by about 80%. If you're doing local SEO correctly, you're already most of the way there. But there are specific strategies to maximize your visibility in AI search.

Structured, entity-based information: AI platforms understand entities (people, places, organizations) better than just keywords. Ensure your website clearly identifies:

- Your law firm name consistently across all pages

- Your attorneys' names with proper credentials

- Practice areas spelled out explicitly (not just implied)

- Location information in structured formats

- NAP consistency everywhere

Comprehensive FAQ content: AI search platforms love pulling from well-structured Q&A content. Create detailed FAQ pages for each practice area answering questions like:

- "How much does a lawyer cost in [location]?"

- "What should I look for when hiring a [practice area] attorney?"

- "How long does a case take in [location]?"

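Complete directory profiles: AI platforms pull from directory profiles, so claim and fully fill out your listings on:

- Avvo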

- Lawyers.com

- Justia

- Super Lawyers

- FindLaw

- Martindale-Hubbell

- Better Business Bureau

- Yelp

Focus on E-E-A-T signals: AI systems are trained to value Experience, Expertise, Authoritativeness, and Trustworthiness. Strengthen these by:

- Publishing case results and client testimonials

- Showcasing attorney credentials, awards, and certifications

- Contributing to legal publications and news outlets

- Maintaining active bar association memberships

- Demonstrating years in practice and case volume

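Target conversational queries: People ask AI tools full questions, so write content around how they actually phrase them:

- "What happens if I get a DUI in Florida?" (not just "Florida DUI penalties")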

- "Can I sue for a slip and fall in a grocery store?" (not just "premises liability")

- Use natural, conversational language in your content

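Earn press and media mentions: AI platforms also draw on legal publications and press mentions, so pursue:

- Local news coverage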

- Legal industry publications

- Community involvement announcements

- Press releases about case outcomes

- Expert commentary in articles

Add schema markup: Mark up your pages with structured data, including:

- LocalBusiness schema for your firm

- Attorney schema for individual lawyers

- LegalService schema for practice areas

- Review schema for testimonials

- FAQPage schema for Q&A content

Together, these steps support your:

- Google Maps Pack rankings

- Traditional organic rankings

- AI search visibility

- Reputation and trust signals
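Schema markup such as FAQPage is usually added to a page as a block of JSON-LD. As a rough sketch of what that looks like, the snippet below builds FAQPage markup using the schema.org vocabulary. The two questions are the conversational examples from this section; the answers are placeholders, and how the script tag gets into your WordPress pages (theme, plugin, or developer) is left open.

```python
import json


def faq_page_jsonld(questions_and_answers):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in questions_and_answers
        ],
    }


# Placeholder answers: swap in text written by your firm's attorneys.
faqs = [
    (
        "What happens if I get a DUI in Florida?",
        "Placeholder answer explaining the process in plain language.",
    ),
    (
        "Can I sue for a slip and fall in a grocery store?",
        "Placeholder answer explaining when a claim may be possible.",
    ),
]

markup = json.dumps(faq_page_jsonld(faqs), indent=2)
# The markup is embedded in the page inside a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The question and answer text in the markup should match what visitors actually see on the page.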

5. Clean up your law firm's website structure

The wrong website structure makes it hard for Google search engine bots to crawl, index, and rank your pages. So get this right early to prevent hurting your website's position in search engine results pages (SERPs).

How do you optimize your site structure? It's more on the technical side, but isn't difficult. It's about having the right pages on your site:

- Home page

- Legal practice areas

- About Us

- Contact

- Results/Testimonials/Case studies

- Blog

- FAQ

- Scholarships/Sponsorships

- Spanish version of all pages

Along the top, you find the site structure. It includes everything we listed above and more.

Here's their results page, which is critical to have for obvious reasons:

And here's Rubenstein Law's practice areas page:

Don't overlook including additional pages, like an FAQ page to address concerns prospects may have that could block them from contacting your firm:

Categorize the questions by practice area, or by theme if you have a single-practice firm. Not only does this help visitors find answers faster (without bothering your office), it's also helpful for SEO.

Aside from capturing keywords for higher rankings, scholarship pages can help with getting backlinks from blogs, educational directories, and press websites.

The more sites that link to yours, the more it shows Google your content is relevant and worth ranking high. Plus, it's a natural process: there's no need to reach out to sites to link back to your scholarship page.

Then based on where you're located, it's ideal to have a link to your site in a different language such as Spanish (or another prominent language in your target area). This is useful for folks who don't speak English as a first language, and it'll give you some SEO juice on Spanish Google.

Hire a freelance translator or use a translation tool, then use an editor to clean it up.

6. Create content that informs and educates

Here’s where you build the roads to drive traffic to your website. Content is what ranks best and what people search for on Google.

Understanding the importance of SEO for lawyers is crucial in creating targeted content that improves online visibility and attracts potential clients.

So you’ll need to develop informative blog posts to educate your audience and potentially guide them to a conversion. The idea is to help visitors understand legal processes and how your firm may help them with their situation.

Note that many of your site’s visitors will include folks who aren’t ready for your services. These are people who are playing the field, looking for answers before determining they need legal representation.

Ideally, you want to publish at least one blog post weekly. For example, my agency builds 20 pages, schedules them to publish weekly, and then promotes them to legal audiences.

Consistently publishing content shows Google your site is fresh and relevant. And you may even get backlinks from other sites that find your content valuable.

Now, you have these folks in your ecosystem, but remember, this isn’t direct marketing traffic. When someone sees a blog post, they don’t instantly become a customer. So the goal is spreading your footprint and growing that exponential hockey stick curve.

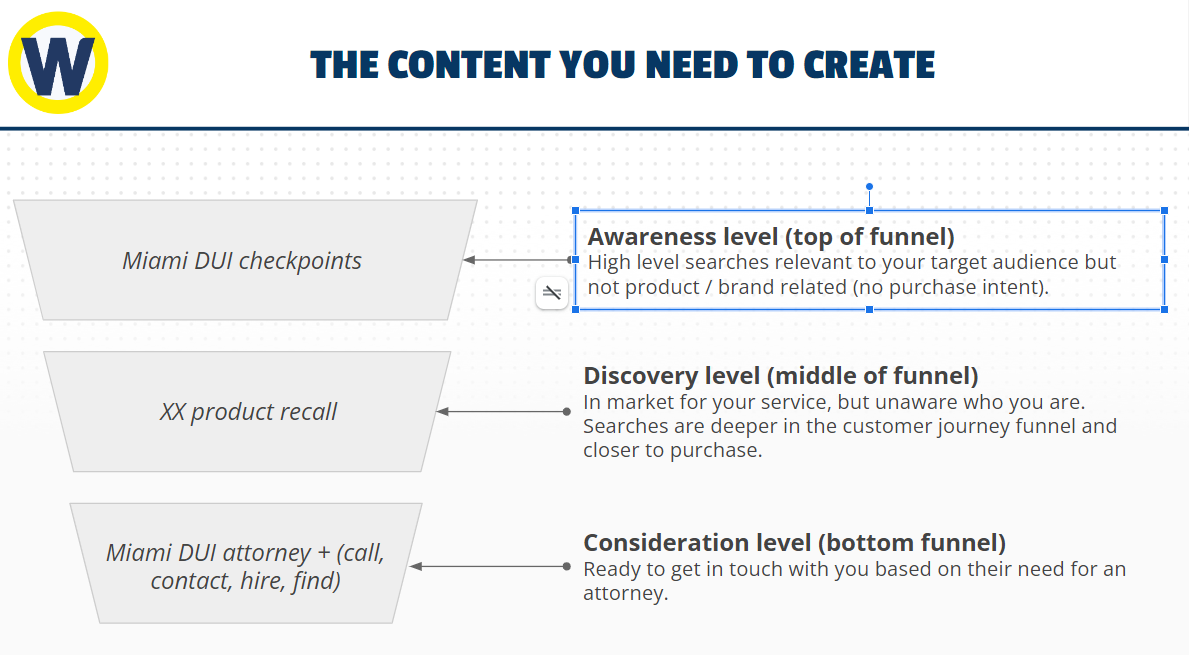

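This is where informational content comes in. Question is, “What type of content should attorneys write?” There are three types of content lawyers should create to build a funnel that guides visitors through the customer journey:

- Top of the funnel content (awareness)

- Middle of the funnel content (discovery)

- Bottom of the funnel content (consideration)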

Not everyone who visits your site will be at the same stage. By crafting content for each stage, you can guide each person closer to the end goal: a conversion.

Top of the funnel content

Top of the funnel visitors aren't aware they have a legal issue. So these are the least likely to convert at the get-go. They conduct high-level searches unrelated to your law firm or service. So they're not looking for an attorney at this point.

Yet, they may look up DUI checkpoints in Miami. Why? Because they likely had a DUI in the past and want to prevent getting another when they go out partying tonight. It's unfortunate, but this puts these individuals in the market for your services in the future.

By creating content around this topic, you put your law firm on their radar for when they land in jail for a DUI. Lawyer SEO is crucial in optimizing legal websites to enhance their visibility in organic search results.

Middle of the funnel content

People in the middle of the funnel are aware of their problem and are looking for a solution. So the intent of their search is deeper and closer to a purchase. For instance, if they look up a product recall, odds are they purchased the item and saw the recall on the news.

Now, they’re on Google looking for an attorney’s help. At this stage, they’re looking to discover solutions to their problem. And since there are a lot more people in the middle who are a step away from a conversion, you want to focus 80% of your content here.

This isn’t just about scaling traffic (like at the top of the funnel)—it’s about scaling phone calls to your office. SEO marketing for law firms is crucial in increasing online visibility and attracting potential clients.

Bottom of the funnel content

When a person understands there's a problem and the solutions available, they're ready to pay for help. They're one step from a conversion, giving you plenty of opportunity to attract their attention and consideration.

This is when people zero in on middle of the funnel content, which should drive them to relevant bottom of the funnel pages, like:

- Service pages

- Results pages

- Contact page

Your content at the awareness and discovery stages should guide traffic through the funnel to scale your growth exponentially.

Here's an example of how a DUI attorney's funnel may look:

- Awareness: "New DUI Law and How it Affects Arizona Drivers" with a link at the end to a middle of the funnel post

- Discovery: "5 Things to Avoid When You Get Arrested for DUI" with a link at the end to a case study page

- Consideration: "How Our Law Firm Helped Hundreds Walk Free After a DUI" with a link at the end to the contact page

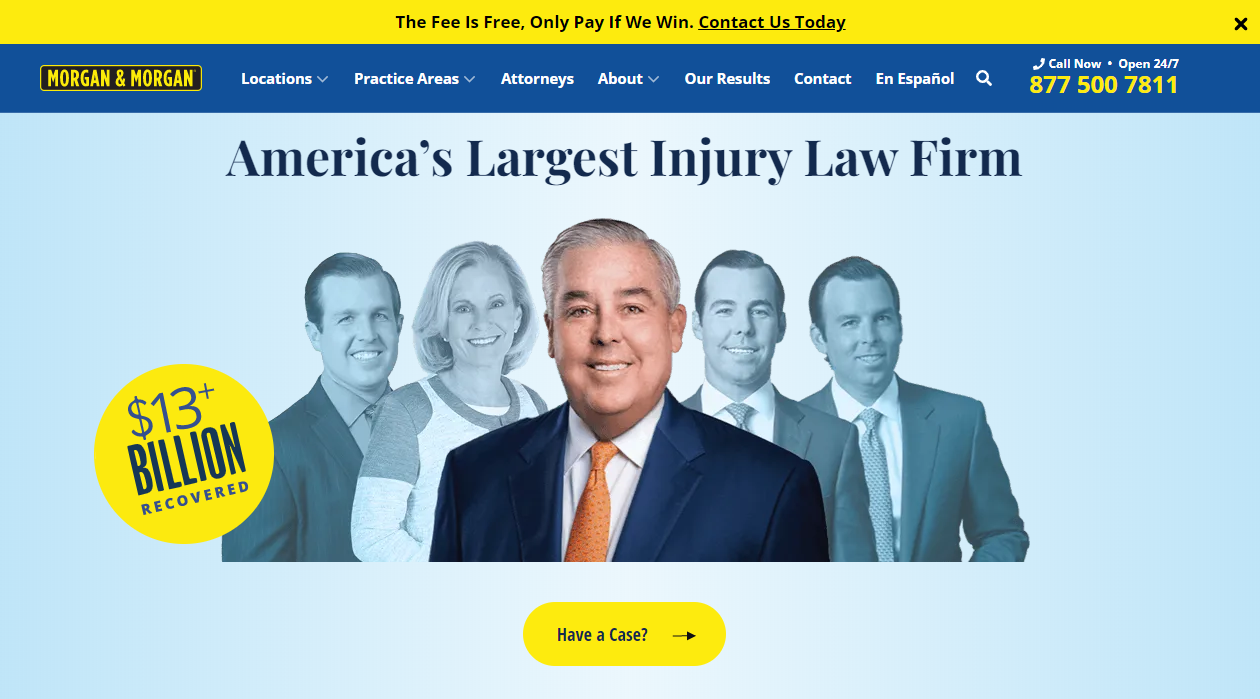

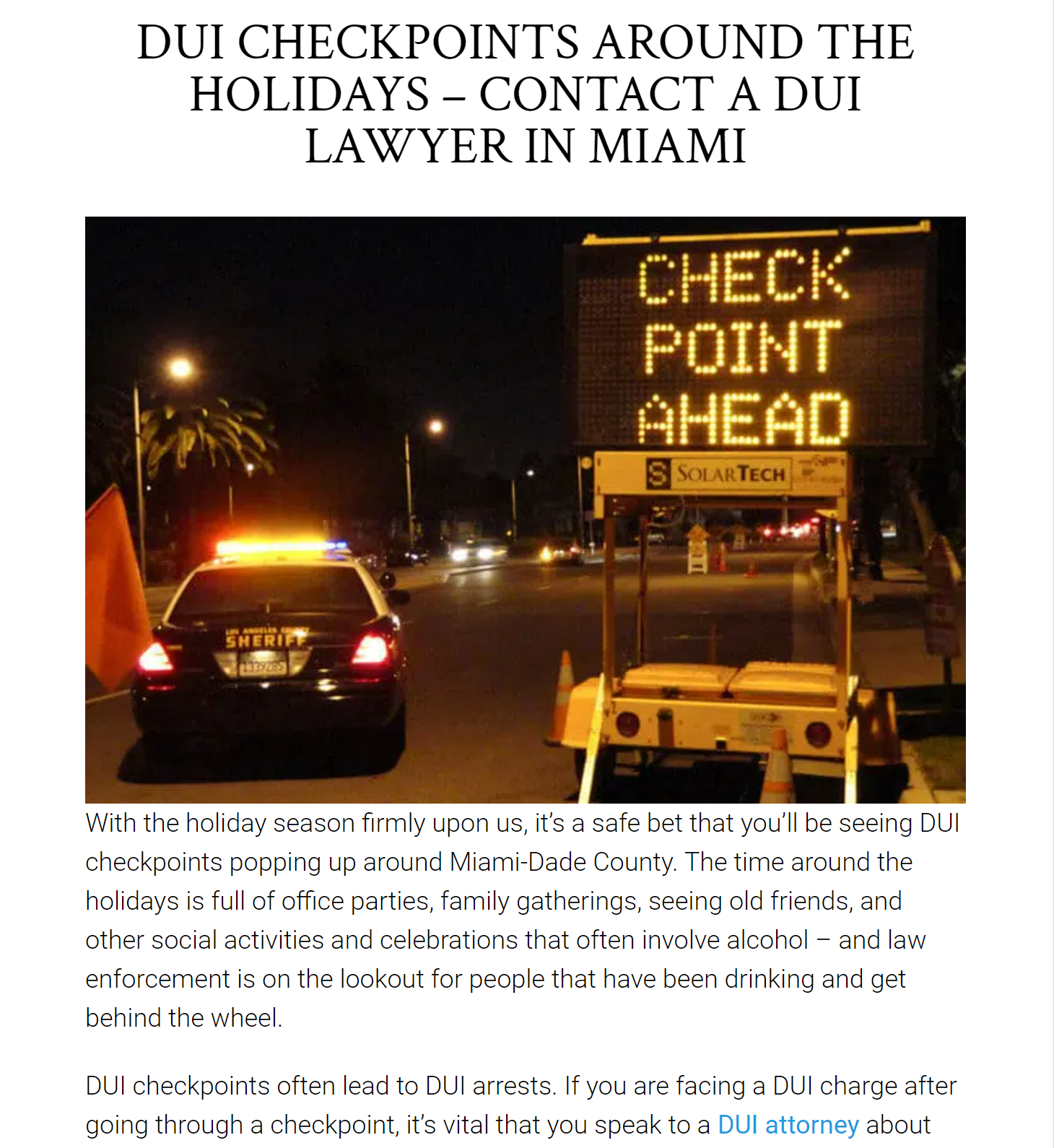

In this example, Morgan and Morgan has a personal injury service page with a call to action to fill out their form for a free consultation.

7. Building backlinks to your pages

Website structure helps Google crawl your pages and content targets keywords to help your site rank. Now, it's time to build your site's authority in the eyes of Google and your visitors. Because with authority comes trust, which increases your ranking and conversion potential.

When a blog post is mentioned on a credible legal blog or press site, it tells Google your site is trustworthy. It's like being vouched for by a known celebrity. Plus, it drives traffic from these sources, further proving your relevancy.

Creating high-value content filled with expert insights and actionable tips makes it easier to get backlinks from notables, like news sites and law magazines. The more links (aka votes) you get, the more it helps your ranking.

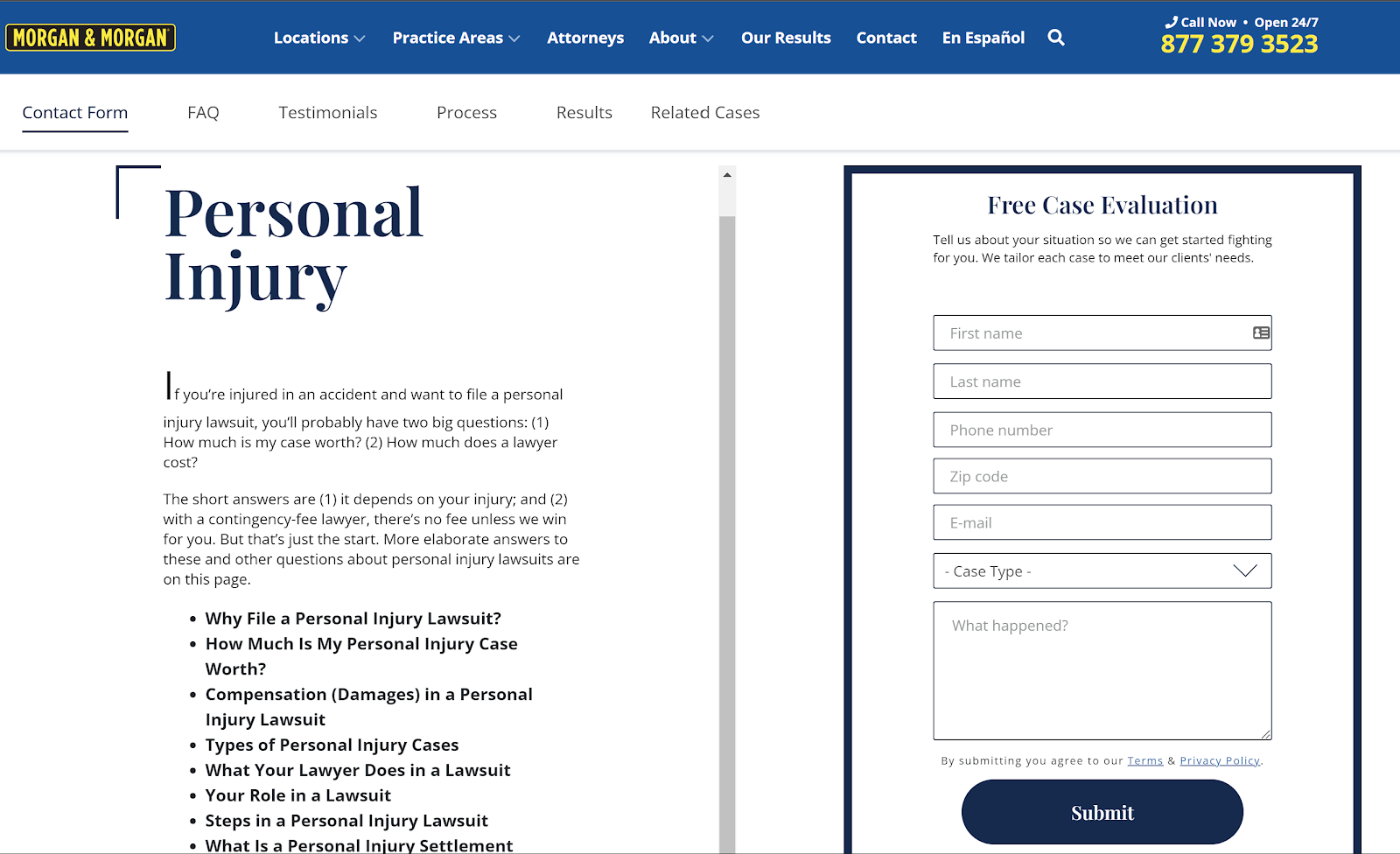

But not all links are created equal.

In the chart, sites in the lower left quadrant have low authority and low traffic (not a suitable backlink). Then in the upper right quadrant, you have Forbes with high traffic and authority (the best link possible).

So a backlink from Forbes gives you a higher vote of authority than one from a low traffic, low authority site. In fact, Google may not count the low-authority backlink at all. So your focus should be on gathering links from sites that have high traffic and/or high authority and that are relevant to your industry and audience.

It's more powerful to get a backlink from a legal journal than a technology website.

Now, there are three types of backlink building attorneys should focus on:

- PR links: Public relations agencies work to get you articles on news sites (e.g., Forbes, Bloomberg, etc.)

- HARO links: Help a Reporter Out is a resource to find articles to contribute quotes to in exchange for a backlink to your website

- Legal websites/blogs: Non-competitive websites in the legal niche that link to your articles, include your quote, or that publish your guest post


We use a combination of all three to help law firms scale backlinking rapidly. I even have a database of legal sites we've worked with before that I reach out to for writing and quote opportunities.

The outcome is increased traffic and conversions for our clients.

SEO is awesome, but requires a lot of work—we can help

You’re an attorney with an endless to-do list. While SEO is proven to help law firms scale, it requires a lot of time and effort you can’t afford to spend.

This is why we recommend partnering with an attorney SEO agency that understands your industry and audience. It ensures you get all the benefits of implementing in-depth SEO strategies. Law firm SEO services are crucial in developing effective SEO strategies tailored to the specific needs of law firms.

We’ll analyze your data from Google Analytics, top competitors, and the total addressable market. Then we run growth models to see the expected return for your investment in our firm.

When you hire us, you know what to anticipate in return for your investment.

Interested in learning more? Contact our growth team to learn how we can scale your law firm.